Scraping Big Lots Store Locations

So, what is the easiest way to get a CSV file of all Big Lots store locations in the USA?

Buy the Big Lots store data from our data store

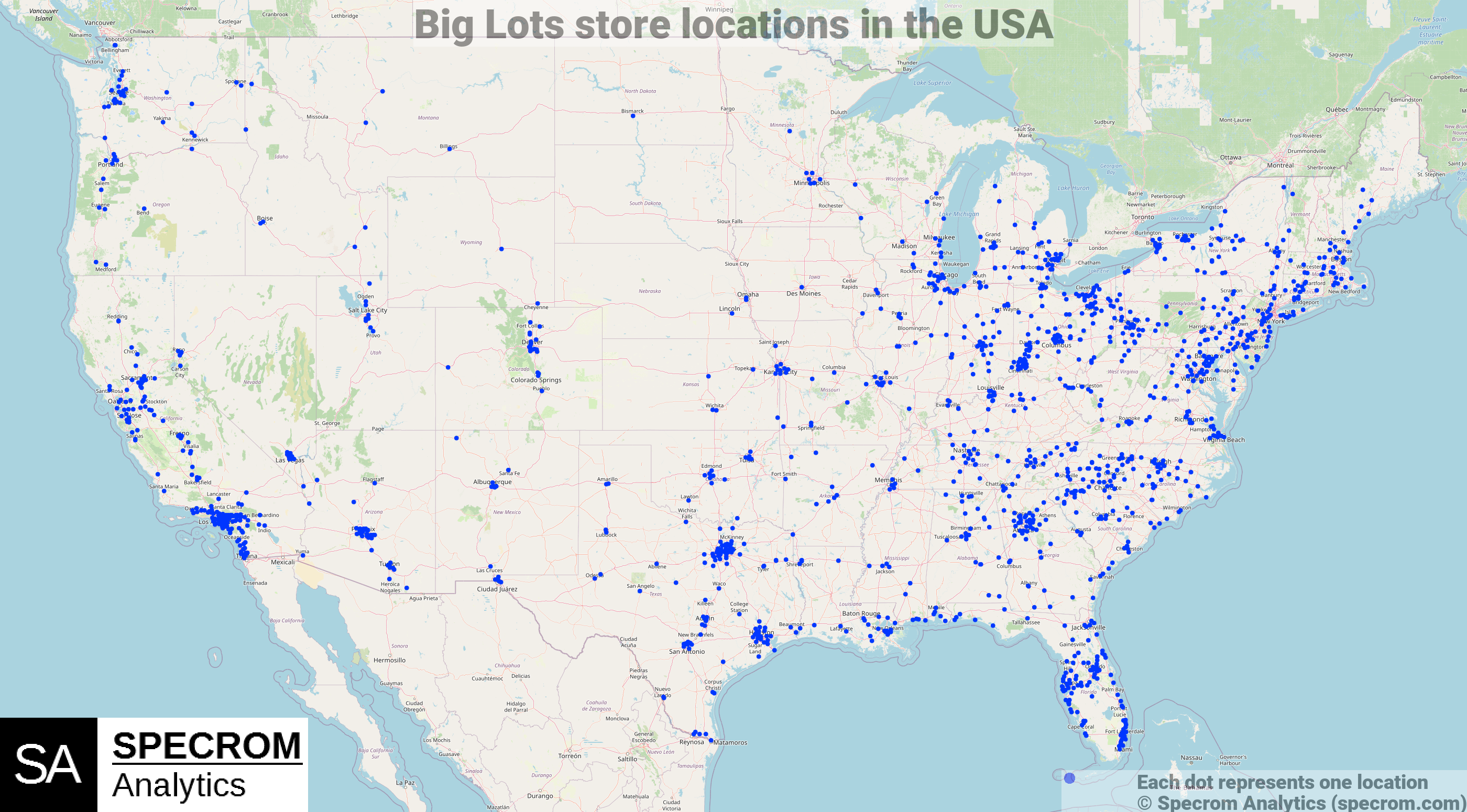

There are over 1,200 Big Lots stores in the USA, and you can buy a CSV file containing the address, city, zip code, latitude, and longitude of each location from our data store for $50.

Figure 1: Big Lots store locations. Source: Big Lots Store Locations dataset

If you would rather scrape the locations yourself, then continue reading the rest of the article.

Scraping the Big Lots store locator using Python

We will keep things simple for now and scrape Big Lots store locations for only one zip code.

Python is great for web scraping, and we will use a library called Selenium to extract the raw HTML source of the Big Lots store locator for zip code 30301 (Atlanta, GA).

- Fetching the raw HTML page from the Big Lots store locator for individual zip codes or cities in the USA

### Using Selenium to extract the Big Lots store locator's raw HTML source

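    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support.ui import Select
    import time
    from bs4 import BeautifulSoup
    import numpy as np
    import pandas as pd

    # store locator search results for zip code 30301
    test_url = 'https://local.biglots.com/search?q=30301&qp=30301&l=en'

    option = webdriver.ChromeOptions()
    option.add_argument("--incognito")

    # replace with the path to your local chromedriver executable
    chromedriver = r'chromedriver_path'
    # Selenium 4 takes the driver path through a Service object
    browser = webdriver.Chrome(service=Service(chromedriver), options=option)

    browser.get(test_url)
    # give the page time to finish loading before reading its source
    time.sleep(5)
    html_source = browser.page_source
    # quit() closes the window and also stops the chromedriver process
    browser.quit()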

Using BeautifulSoup to extract Big Lots store details

Once we have the raw HTML source, we can parse it with a Python library called BeautifulSoup.

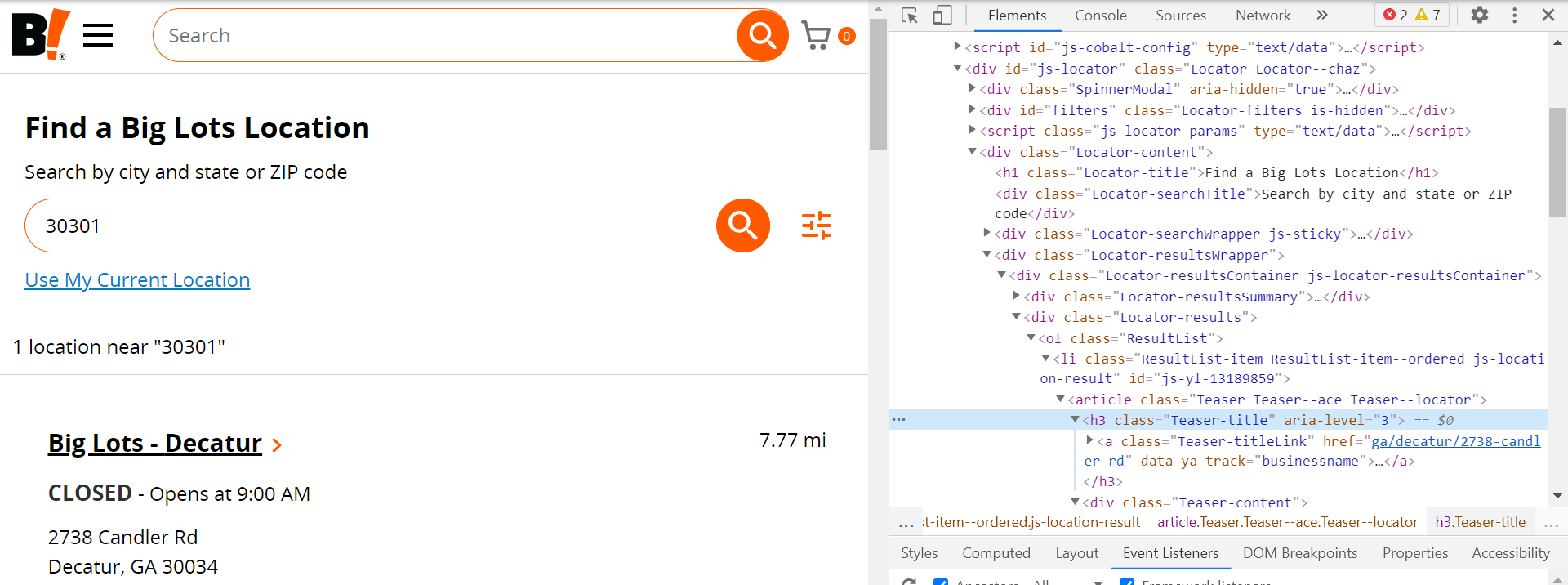

- Open the page in the Chrome browser and click Inspect.

Figure 2: Inspecting the source of the Big Lots store locator.

We will start by extracting the store names.

The data will still require some cleaning to separate out store names, address line 1, address line 2, city, state, zip code, and phone numbers, but that is just basic Python string manipulation, and we will leave it as an exercise for the reader.

    # extracting Big Lots store names
    soup = BeautifulSoup(html_source, "html.parser")
    # each store name sits in an <h3> element with the class Teaser-title
    store_name_list_src = soup.find_all('h3', {'class': 'Teaser-title'})

    store_name_list = []
    for val in store_name_list_src:
        store_name_list.append(val.get_text())

    store_name_list

    # Output
    ['Big Lots - Decatur']

The next step is extracting addresses. Referring back to the Chrome inspector, we see that each address sits in an element with the class name c-address, so we use the BeautifulSoup find_all method to extract them into a list.

    # extracting Big Lots store addresses
    addresses_src = soup.find_all('address', {'class': 'c-address'})

    address_list = []
    for val in addresses_src:
        address_list.append(val.get_text())

    address_list

    # Output
    ['2738 Candler Rd Decatur, GA 30034 US']
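
As a starting point for the cleaning exercise mentioned earlier, the sketch below splits an address string of the form shown in the output into its trailing components. It assumes every address ends in a pattern like `, GA 30034 US`; the helper name `split_address` and the regular expression are illustrative and not part of the original scraper. Because `get_text()` joins the street and city without a delimiter, the sketch leaves those two combined.

```python
import re

# Assumed layout: "<street and city>, <2-letter state> <zip> <optional country>"
ADDRESS_RE = re.compile(
    r'^(?P<street_city>.+),\s*(?P<state>[A-Z]{2})\s+'
    r'(?P<zip>\d{5}(?:-\d{4})?)\s*(?P<country>[A-Z]{2})?$'
)

def split_address(raw):
    """Return a dict of address parts, or None if the text does not match."""
    match = ADDRESS_RE.match(raw.strip())
    return match.groupdict() if match else None

print(split_address('2738 Candler Rd Decatur, GA 30034 US'))
```

Separating the street from the city reliably would likely require reading the nested elements inside the address tag rather than its flattened text.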

We will extract store services using a similar approach.

    # extracting store services for each Big Lots store
    local_services_src = soup.find_all('div', {'class': 'Teaser-services'})

    local_services_list = []
    for val in local_services_src:
        local_services_list.append(val.get_text())

    local_services_list

    # Output
    ['Store Services: Full Furniture with Mattresses, Furniture Leasing, Furniture Delivery, Fresh Dairy & Frozen Foods, SNAP/EBT']
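
The services text comes back as a single string per store. A small sketch, assuming the `Store Services:` prefix and the comma separators shown in the output above, turns it into a list; `parse_services` is a hypothetical helper name.

```python
def parse_services(raw):
    """Split 'Store Services: A, B, C' into ['A', 'B', 'C']."""
    # keep only the text after the first colon, if there is one
    _, sep, rest = raw.partition(':')
    body = rest if sep else raw
    # drop surrounding whitespace and any empty fragments
    return [item.strip() for item in body.split(',') if item.strip()]

services = parse_services(
    'Store Services: Full Furniture with Mattresses, Furniture Leasing, '
    'Furniture Delivery, Fresh Dairy & Frozen Foods, SNAP/EBT'
)
print(services)
```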

Each store location has its own URL, where additional information such as store closing times and phone numbers is available.

    # extracting store URLs
    store_url_list = []
    for val in store_name_list_src:
        try:
            # the store link is nested inside the title element
            store_url_list.append('https://local.biglots.com/' + val.find('a')['href'])
        except (TypeError, KeyError):
            # skip titles that have no link or no href attribute
            pass

    store_url_list

    # Output
    ['https://local.biglots.com/ga/decatur/2738-candler-rd']
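
Since the end goal is a CSV file, the extracted lists can be combined row by row. The sketch below uses Python's built-in `csv` module with the sample values from the outputs above. The column names and the `rows_to_csv` helper are assumptions, and the approach only works if the lists have the same length and order.

```python
import csv
import io

def rows_to_csv(names, addresses, urls):
    """Combine parallel lists into CSV text with one row per store."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator='\n')
    writer.writerow(['store_name', 'address', 'store_url'])
    # zip() pairs the n-th name with the n-th address and URL
    writer.writerows(zip(names, addresses, urls))
    return buffer.getvalue()

csv_text = rows_to_csv(
    ['Big Lots - Decatur'],
    ['2738 Candler Rd Decatur, GA 30034 US'],
    ['https://local.biglots.com/ga/decatur/2738-candler-rd'],
)
print(csv_text)
```

To write to disk instead, the same writer calls can target a file opened with `open('biglots.csv', 'w', newline='')`.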

Geocoding

You will need the latitude and longitude of each store if you want to plot the stores on a map like Figure 1.

Latitudes and longitudes are also necessary to calculate distances between points, driving radii, and so on, all of which are important parts of location analysis.

We recommend that you use a robust geocoding service like Google Maps to convert addresses into coordinates (latitudes and longitudes). It costs $5 per 1,000 addresses, but in our view it is well worth it.

There are some free geocoding alternatives based on OpenStreetMap, but none that match the accuracy of Google Maps.

In the example below, we use Nominatim, an OpenStreetMap-based geocoding API.

    from geopy.geocoders import Nominatim

    # Nominatim expects a user agent that identifies your application
    nom = Nominatim(user_agent="my-application")
    location = nom.geocode('Decatur, GA 30034')
    location

    # Output
    Location(Decatur, DeKalb County, Georgia, United States, (33.7737582, -84.296069, 0.0))
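
Once coordinates are available, the distance calculations mentioned above can be sketched with the haversine formula, which gives the great-circle distance between two latitude/longitude pairs. The function name, the mean Earth radius of 6,371 km, and the downtown Atlanta coordinates are assumptions for illustration; driving distances would need a routing service instead.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points given in degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlambda = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))

# Decatur coordinates come from the geocoder output; the Atlanta point is approximate
decatur = (33.7737582, -84.296069)
atlanta = (33.749, -84.388)
print(round(haversine_km(*decatur, *atlanta), 1))
```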

Scaling up to a full crawler for extracting all Big Lots store locations in the USA

Once you have the above scraper that can extract data for one zip code or city, you will have to iterate through all the US zip codes.

It depends on how much coverage you want, but for a national chain like Big Lots you are looking at running the above scraper 100,000 times or more to ensure that no region is left out.
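
Iterating over zip codes mostly comes down to generating one search URL per zip code and discarding repeats, since neighboring zip codes will often return the same store. The sketch below assumes the query-string pattern of the test URL used earlier holds for every zip code; `build_search_url` and `merge_unique_stores` are hypothetical helpers, and stores are keyed by their URL.

```python
from urllib.parse import urlencode

BASE_URL = 'https://local.biglots.com/search'

def build_search_url(zipcode):
    """Build the locator search URL for one zip code."""
    query = urlencode({'q': zipcode, 'qp': zipcode, 'l': 'en'})
    return f'{BASE_URL}?{query}'

def merge_unique_stores(results_per_zip):
    """Flatten per-zip results, keeping the first record seen for each store URL."""
    unique = {}
    for stores in results_per_zip:
        for store in stores:
            unique.setdefault(store['url'], store)
    return list(unique.values())

print(build_search_url('30301'))
```

Here each element of `results_per_zip` is assumed to be a list of dicts with at least a `url` key, one list per zip code searched.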

Once you scale up to thousands of requests, the Biglots.com servers will start blocking your IP address outright, or you will be flagged and start getting CAPTCHAs.

To improve your chances of successfully fetching data for the whole USA, you will have to implement:

- Rotating proxy IP addresses, preferably residential proxies.

- Rotating user agents.

- An external CAPTCHA-solving service like 2captcha or anticaptcha.com.
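
The first two items can be sketched as a helper that picks the next user agent and proxy for each browser session. The proxy addresses below are placeholders from a reserved documentation range, the user-agent strings are examples only, and `chrome_args_for_session` is a hypothetical name. `--user-agent` and `--proxy-server` are Chrome command-line switches; CAPTCHA solving is not covered here.

```python
from itertools import cycle

# Example values only; substitute your own proxy pool and current user agents
USER_AGENTS = [
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
    '(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',
    'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 '
    '(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',
]
PROXIES = ['203.0.113.10:8080', '203.0.113.11:8080', '203.0.113.12:8080']

_user_agents = cycle(USER_AGENTS)
_proxies = cycle(PROXIES)

def chrome_args_for_session():
    """Return Chrome arguments using the next user agent and proxy in rotation."""
    return [
        f'--user-agent={next(_user_agents)}',
        f'--proxy-server=http://{next(_proxies)}',
    ]

for _ in range(3):
    print(chrome_args_for_session())
```

Each returned string would be passed to `option.add_argument(...)` before a new browser is created, so that every session presents a different identity.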

After you follow all the steps above, you will realize that our pricing ($50) for scraped location data covering all Big Lots stores is one of the most competitive in the market.