Amazon Web Services (AWS)

What is cloud computing?

Cloud computing is the on-demand availability of computer system resources such as data storage and computing power without direct active management by the user.

Cloud computing powers most of today's well-known Internet apps, including Airbnb and Netflix. Almost any monetization idea can be implemented by combining cloud computing services.

If you are a current customer of our text analytics API, the analysis you get through our APIs is done on cloud servers.

One of the biggest cloud computing providers in the market today is Amazon Web Services (AWS). An indicative list of AWS customers includes:

- Netflix

- Siemens

- BBC

- S&P Global

- Shell

- T-Mobile

A full list of their current customers is available on the AWS case studies site.

List of AWS products

As of December 2019, AWS markets over 170 products belonging to the following subcategories:

Compute: Amazon Elastic Compute Cloud (EC2), AWS Lambda, Elastic Load Balancing, AWS Elastic Beanstalk

Security, Identity & Compliance: AWS Identity and Access Management (IAM), Amazon Cognito, AWS Key Management Service

Database: Amazon DocumentDB, Amazon DynamoDB, Amazon Neptune, Amazon Aurora, Amazon Relational Database Service (RDS)

Analytics: Amazon Athena, Amazon Elasticsearch Service, Amazon Redshift, AWS Glue, Amazon Elastic MapReduce

Storage: Amazon Glacier, Amazon S3, Amazon Elastic Block Store (EBS)

Machine Learning: Amazon SageMaker, Amazon Comprehend, Amazon Personalize, Amazon Lex, Amazon Rekognition, Amazon Textract, Amazon Polly, Amazon Transcribe

Management Tools: AWS Management Console, Amazon CloudWatch, AWS CloudFormation, AWS CloudTrail

Migration: AWS Server Migration Service

Messaging: Amazon Simple Email Service (Amazon SES), Amazon Simple Notification Service (SNS)

Developer Tools: AWS CodeDeploy, AWS CodeBuild

Application Services: Amazon API Gateway

Networking & Content Delivery: Amazon CloudFront

Internet of Things: Amazon FreeRTOS, AWS IoT Analytics

As you can see, AWS has products that cover almost every aspect of serving content over the internet to your customers.

For brevity, we will focus on the most important products (AWS Management Console, EC2, Lambda, S3, CloudWatch, CloudTrail and IAM), which should give you an idea of the compute, data storage, management, and security, identity & compliance capabilities of AWS.

How to interact with AWS products?

There are four main ways to interact with AWS products:

AWS Management Console: A web-based management application with a user-friendly interface that lets you control and manipulate a wide variety of AWS resources. We will use this as our main way to interact with AWS.

AWS command line interface (CLI): A downloadable tool that lets you control all aspects of AWS from your computer's command line. Since it operates from the command line, you can easily automate any or all aspects through scripts.

AWS SDKs: AWS provides software development kits (SDKs) for programming languages such as Java, Python, Ruby, C++ etc. You can use these to tightly integrate AWS services such as machine learning, databases or storage (S3) with your application.

AWS CloudFormation: AWS CloudFormation allows you to use programming languages or a simple text file to model and provision, in an automated and secure manner, all the resources needed for your applications across all regions and accounts. This is frequently referred to as “Infrastructure as Code”, which can be broadly defined as using programming languages to control infrastructure.

All four methods above have pros and cons with respect to usability, maintainability, robustness etc., and there is a lively debate on Stack Overflow and Reddit about which approach is best.
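As a rough illustration of the SDK route, the sketch below uses boto3, the AWS SDK for Python, to list the S3 buckets in an account. It assumes boto3 is installed and that AWS credentials are already configured on the machine; the function names `bucket_names` and `list_my_buckets` are ours, chosen for illustration.

```python
def bucket_names(response):
    """Extract bucket names from the dictionary returned by S3 list_buckets."""
    return [bucket["Name"] for bucket in response.get("Buckets", [])]


def list_my_buckets():
    """Call S3 through boto3 and return the names of the account's buckets."""
    import boto3  # imported lazily so bucket_names() works without boto3

    s3 = boto3.client("s3")
    return bucket_names(s3.list_buckets())
```

Calling `list_my_buckets()` performs a real API request, so it only works with valid credentials. Keeping the parsing in a separate function makes it easy to check against a hand-written response dictionary.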

Amazon Simple Storage Service (Amazon S3)

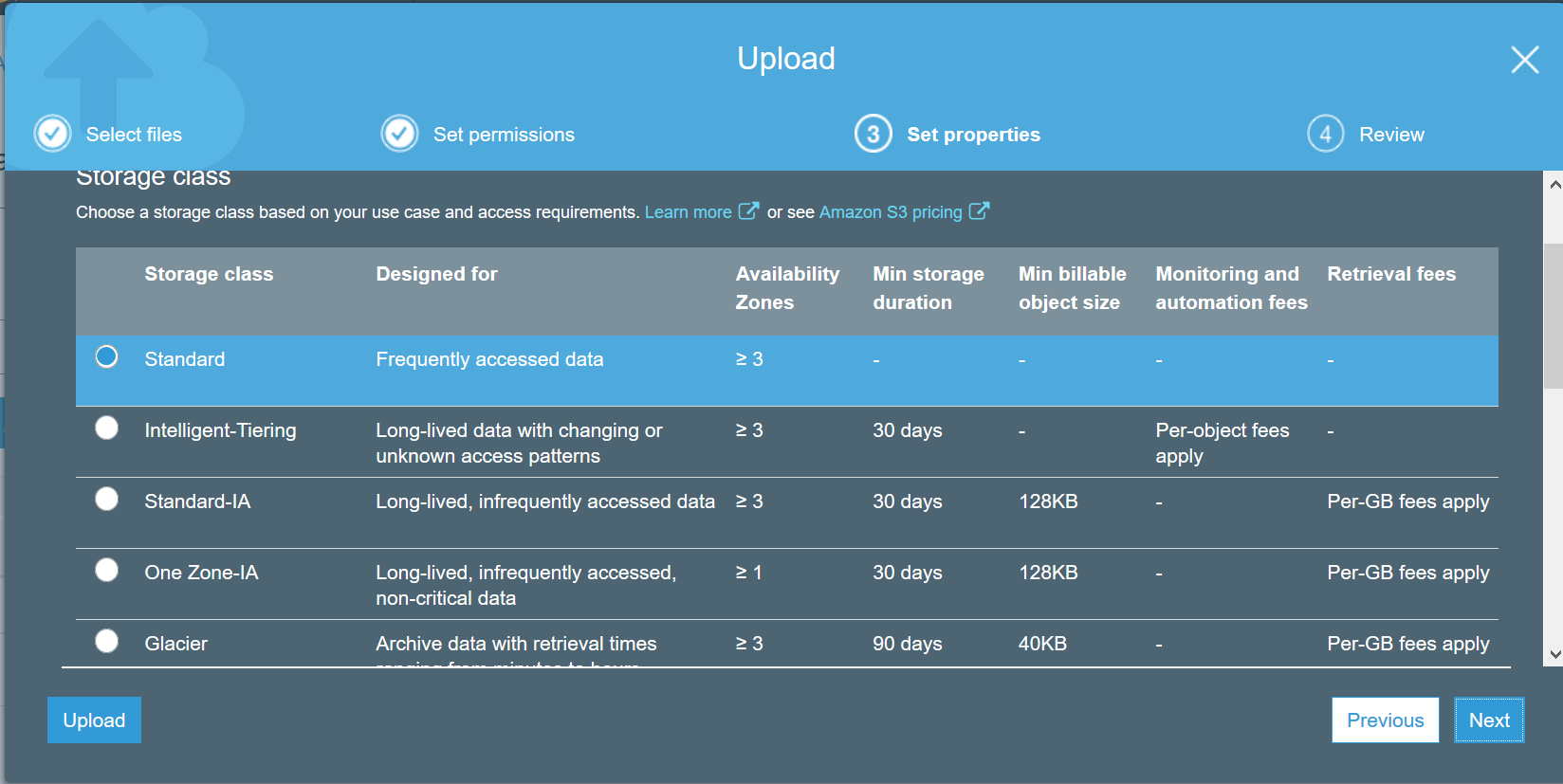

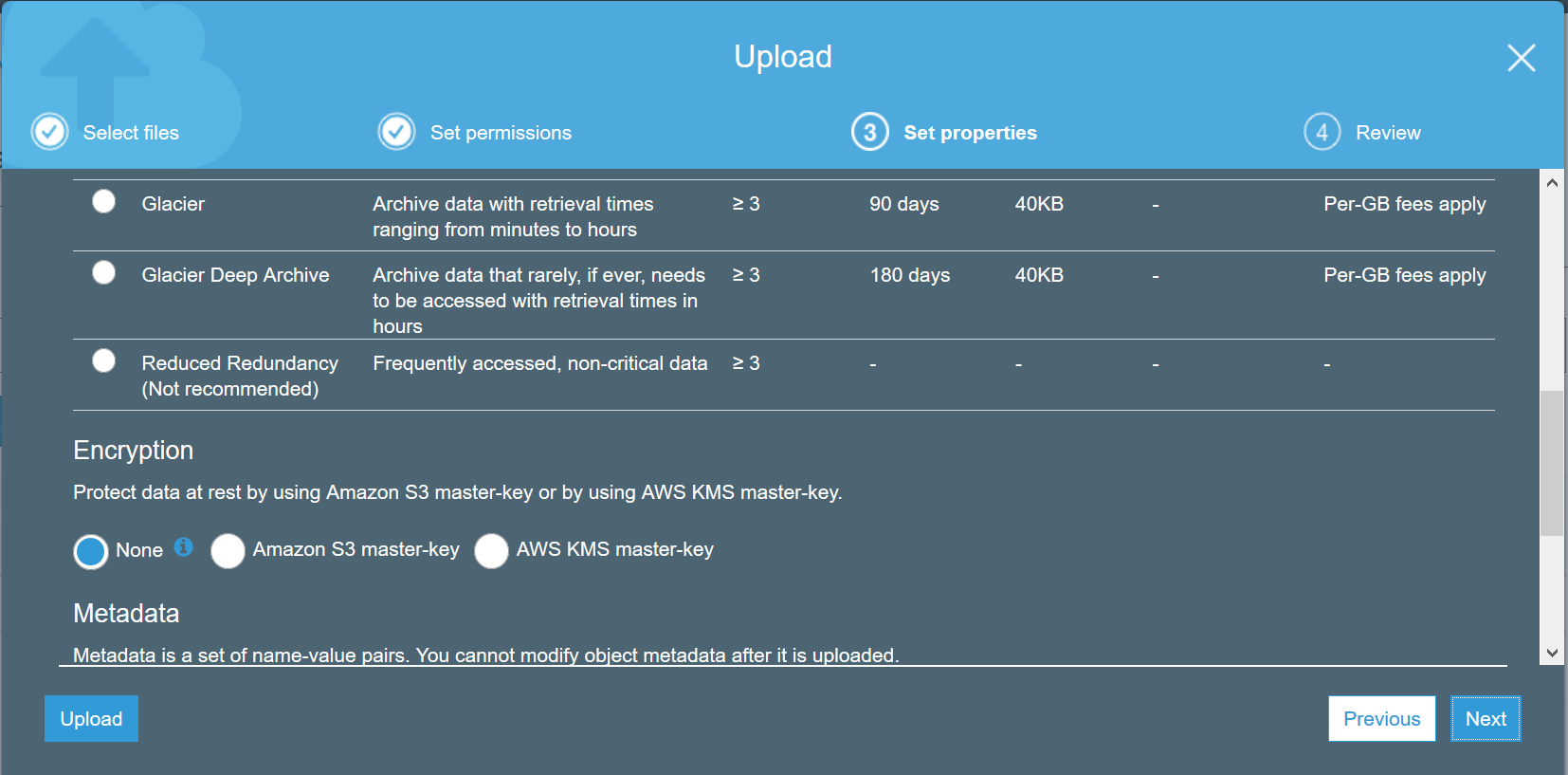

Amazon S3 is a web service that lets you store and retrieve data organized as objects. It aims to provide scalability, high availability, and low latency, with 99.999999999% durability depending on the S3 storage class. S3 objects are stored in constructs called buckets, which are specific to a region.

S3 has different pricing tiers, which charge a user based on how frequently the data is accessed (read/write), how long it is stored, and how much data is stored.

- S3 Standard is used for frequently accessed data

- S3 Intelligent tiering lets you store data with unknown or changing access patterns

- S3 Glacier or S3 Glacier Deep Archive is used for infrequently accessed data such as backups
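When uploading through the SDK, the tier is chosen per object with a storage class identifier. The sketch below, in Python, maps the tiers listed above to the `StorageClass` values accepted by the S3 API and builds the arguments for boto3's `put_object` call. The tier labels used as dictionary keys and the helper name `put_object_args` are our own, for illustration only.

```python
# S3 API storage class identifiers for the tiers listed above.
# The dictionary keys are informal labels chosen for this example.
STORAGE_CLASS_BY_TIER = {
    "standard": "STANDARD",
    "intelligent-tiering": "INTELLIGENT_TIERING",
    "glacier": "GLACIER",
    "glacier-deep-archive": "DEEP_ARCHIVE",
}


def put_object_args(bucket, key, body, tier="standard"):
    """Build keyword arguments for an S3 put_object call in the given tier."""
    if tier not in STORAGE_CLASS_BY_TIER:
        raise ValueError(f"unknown tier: {tier!r}")
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "StorageClass": STORAGE_CLASS_BY_TIER[tier],
    }
```

With boto3 installed and credentials configured, the result can be passed along as `boto3.client("s3").put_object(**put_object_args("my-bucket", "backup.zip", data, tier="glacier"))`, where the bucket and key names are placeholders.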

AWS Identity and Access Management (IAM)

IAM allows you to securely control access to AWS resources. When you register for AWS, the user ID you create is called the root user, which has the most extensive permissions available within IAM.

AWS recommends that you refrain from using the root user for everyday tasks, and instead use it only to create your first IAM user.

Let us break down IAM into its components:

IAM user: used to grant AWS access to other people.

IAM group: used to grant the same level of access to multiple people.

IAM role: used to grant access to other AWS resources, for example, to allow an EC2 server to access an S3 bucket.

IAM policy: used to define granular permissions for an IAM user, IAM group, or IAM role.

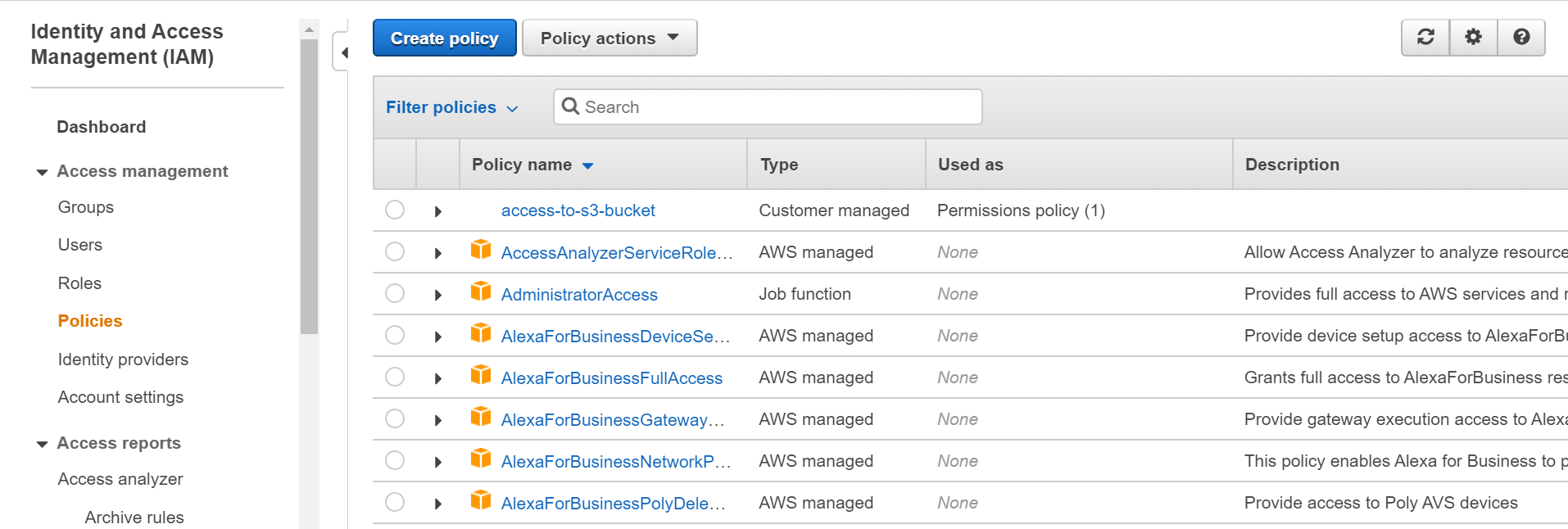

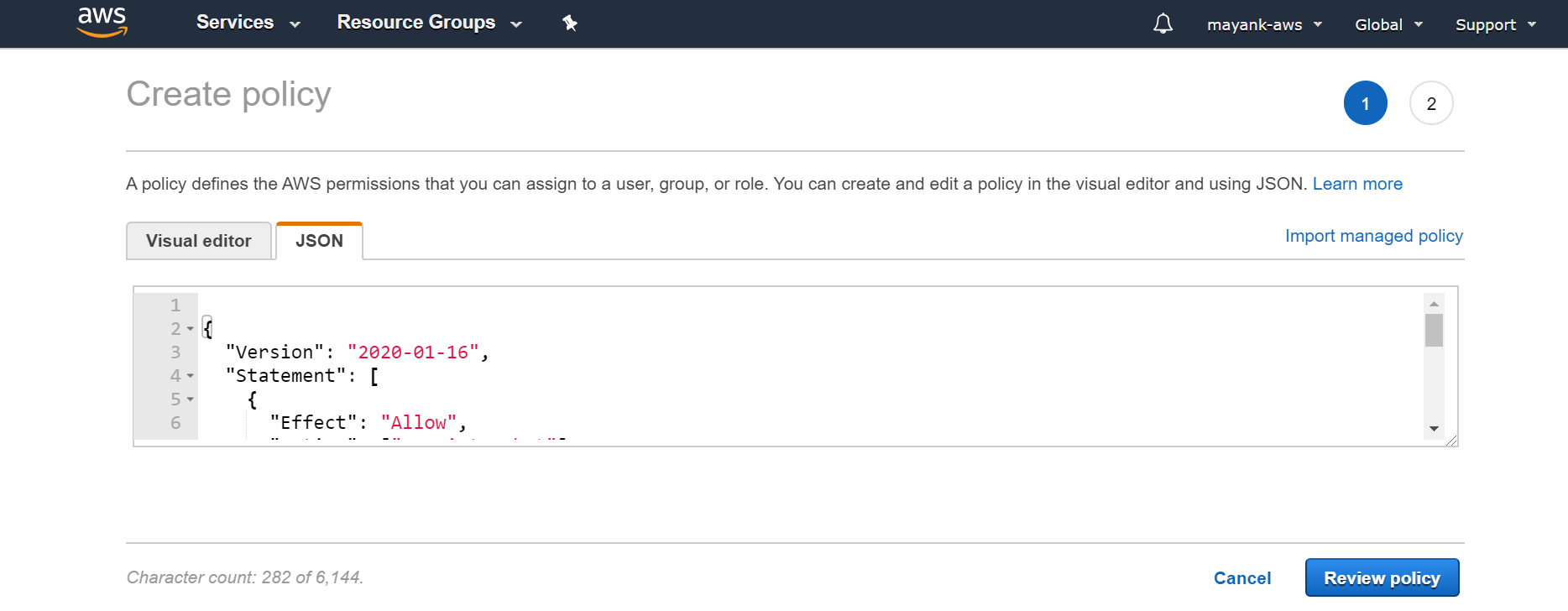

Setting a new IAM policy

Let us set up an IAM policy and an IAM role for a simple scenario where our EC2 server has to access data in an S3 bucket.

- Go to the IAM console

- Click Policies, create a new policy, and copy-paste the contents of the text file “access-to-s3-bucket.txt” into the JSON text box.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": ["s3:ListBucket"],
          "Resource": ["arn:aws:s3:::ec2-testing-for-s3-permissions"]
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:PutObject",
            "s3:GetObject",
            "s3:DeleteObject"
          ],
          "Resource": ["arn:aws:s3:::ec2-testing-for-s3-permissions/*"]
        }
      ]
    }

This policy grants the EC2 instance specific access so that it can:

- list the contents of the specific S3 bucket

- read files from that S3 bucket

- write files to that S3 bucket, so that the EC2 instance can upload files to it

- delete files from that S3 bucket

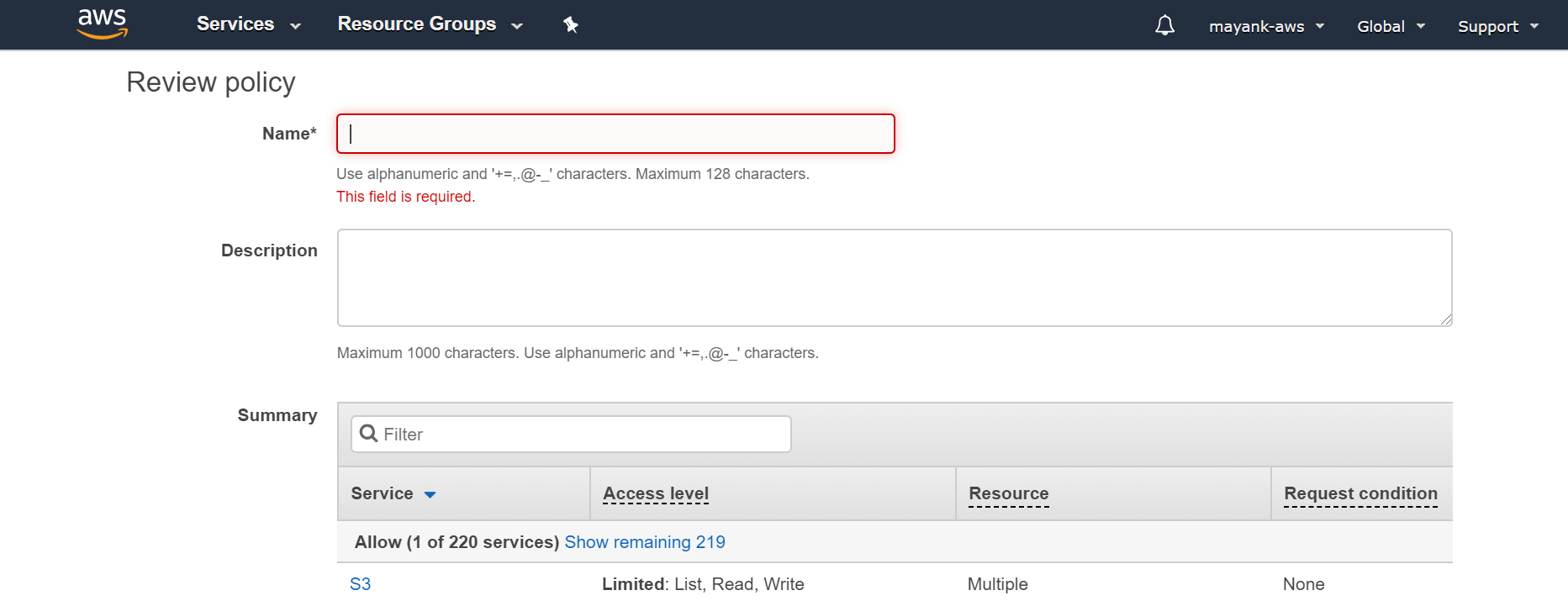

- On the next screen, name this policy “access-to-s3-bucket” and add a description of your choice
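If you need the same policy for several buckets, the JSON document above can be generated rather than edited by hand. The Python sketch below rebuilds it for an arbitrary bucket name; the function names are ours, and the output is meant to be pasted into the same JSON text box.

```python
import json


def build_s3_access_policy(bucket_name):
    """Return the bucket access policy shown above as a Python dictionary."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [bucket_arn],
            },
            {
                # Read, write and delete apply to the objects inside it.
                "Effect": "Allow",
                "Action": ["s3:PutObject", "s3:GetObject", "s3:DeleteObject"],
                "Resource": [f"{bucket_arn}/*"],
            },
        ],
    }


def policy_json(bucket_name):
    """Serialize the policy to an indented JSON string."""
    return json.dumps(build_s3_access_policy(bucket_name), indent=2)
```

Note the two different resources: `s3:ListBucket` targets the bucket ARN, while the object-level actions target the ARN followed by `/*`.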

Creating a new IAM role

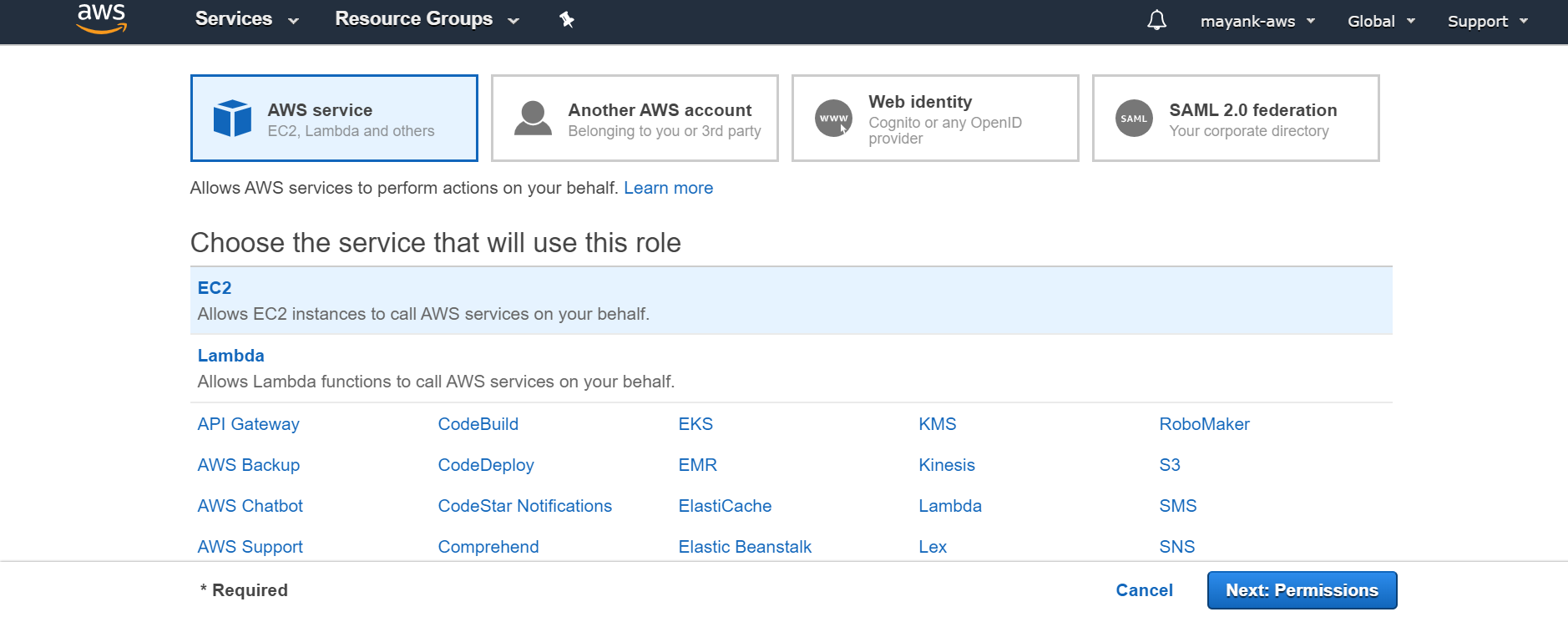

Click on the “Create role” button to start creating a new role.

Select AWS service, click on EC2, and then click Next: Permissions

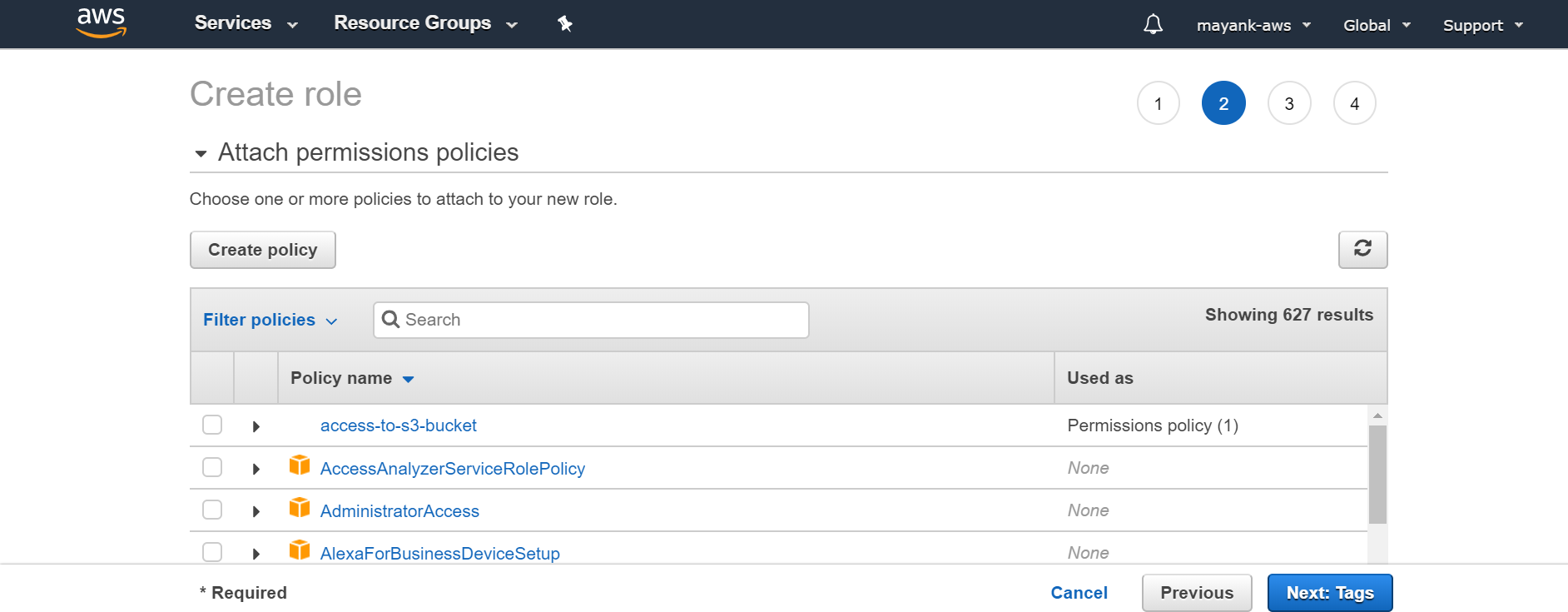

- Select the policy you just created in the section above. If you can't find it immediately, click Filter policies and select Customer managed. Click Next.

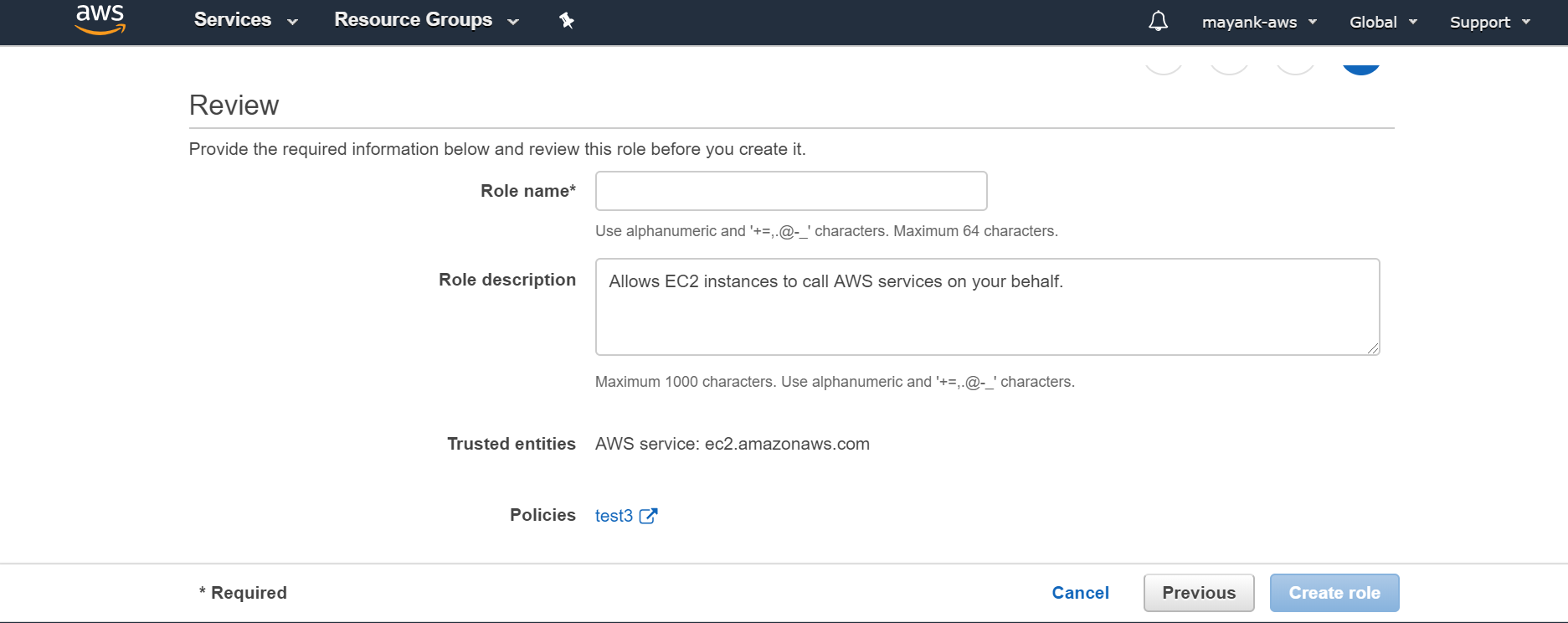

- Finally, on the last page, name the role “ec2_to_s3”
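The same role can be created through the SDK instead of the console. The sketch below is one possible way to do it in Python with boto3, not a description of what the console does internally. It assumes boto3 is installed, credentials are configured, and you already know the ARN of the policy created earlier; the function names are ours. Choosing EC2 in the console corresponds to a trust policy that lets the EC2 service assume the role, and attaching a role to an instance through the API also needs an instance profile.

```python
import json


def ec2_trust_policy():
    """Trust policy that lets the EC2 service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "ec2.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def create_ec2_role(role_name, policy_arn):
    """Create the role, attach the policy, and wrap it in an instance profile."""
    import boto3  # imported lazily; needs valid AWS credentials to run

    iam = boto3.client("iam")
    iam.create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(ec2_trust_policy()),
    )
    iam.attach_role_policy(RoleName=role_name, PolicyArn=policy_arn)
    # EC2 instances receive roles through an instance profile.
    iam.create_instance_profile(InstanceProfileName=role_name)
    iam.add_role_to_instance_profile(
        InstanceProfileName=role_name, RoleName=role_name
    )
```

A call would look like `create_ec2_role("ec2_to_s3", policy_arn)`, where `policy_arn` is the ARN shown on the policy's page in the IAM console.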

Amazon Elastic Compute Cloud (Amazon EC2)

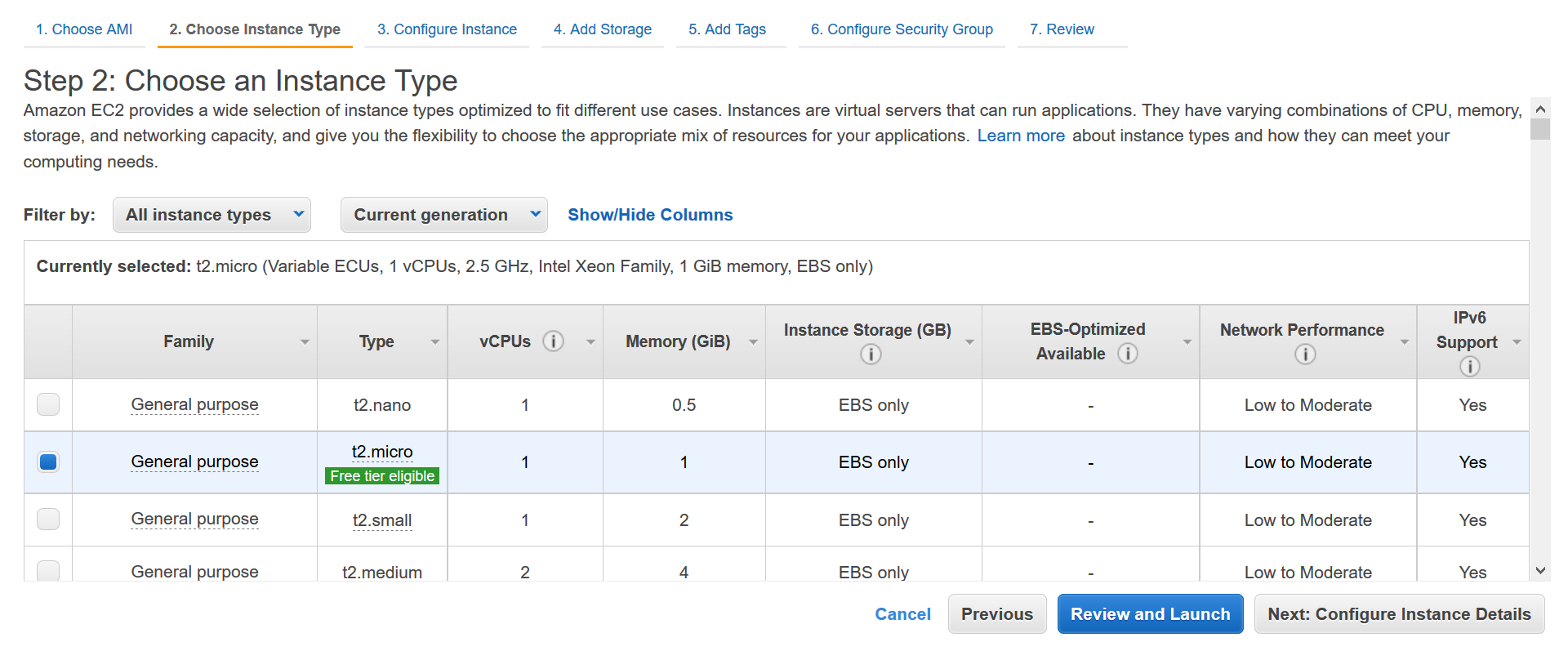

Amazon Elastic Compute Cloud (Amazon EC2) is a web service that provides scalable compute capacity in the cloud. Basically, you can start and stop a server in a geographical area of your choice with the configuration you want, and you can either pay by the second or reserve it for a longer duration.

From a technical standpoint, EC2 has a wide variety of servers, from high-RAM instances to instances with many CPU cores. In the past couple of years it has also added GPU instances, which are predominantly used for training deep learning models.

An AWS EC2 instance refers to a particular server operated from a specific geographical area.

From a pricing standpoint, all the servers are available at three distinct price points:

The most expensive option is typically “On-Demand instances”, where you pay for compute capacity by the hour or the second, depending on the instances you run. You can spin up a new server or shut it down based on the demands of your application and pay only the specified hourly rate for the instances you use.

The second option is called “reserved instances”; here you reserve a particular server for 1 or 3 years and get a discount of as much as 75% compared to running an on-demand server for the same period (for 1 year, 24 × 365 = 8,760 hours).

The third option is known as “spot instances”. These are available in off-peak hours and can be run in increments of 1 hour, up to 6 hours. They are also discounted relative to on-demand instances.

AWS also charges differently for servers located in different geographical areas. The cheapest are the ones located in US East (N. Virginia) and US East (Ohio), and we always use those locations as our default.

Note: Reserved and spot instances have real advantages, and indeed my startup uses them about 65-70% of the time. However, you may end up paying more overall if you did not benchmark your code well and assumed you needed a server for 750 hours when in reality you only needed it for 300-400 hours, so the remaining hours were wasted. In that scenario, you save money by paying by the second (even if the per-second rate is higher) and shutting off the server when the work is complete.
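The trade-off in the note can be checked with a little arithmetic. The Python sketch below compares paying on demand for only the hours used against a reservation billed for every hour of the month. The $0.10 hourly rate and the 40% discount are made-up illustrative numbers, not AWS prices, and the model ignores upfront payment options.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def on_demand_cost(hourly_rate, hours_used):
    """Pay the full rate, but only for the hours the server actually runs."""
    return hourly_rate * hours_used


def reserved_cost(hourly_rate, discount, hours=HOURS_PER_MONTH):
    """Pay a discounted rate for every hour, whether the server is used or not."""
    return hourly_rate * (1 - discount) * hours


def break_even_hours(discount, hours=HOURS_PER_MONTH):
    """Monthly usage above which the reservation becomes the cheaper option."""
    return (1 - discount) * hours


# Hypothetical numbers: $0.10/hour on demand, 40% reservation discount.
rate, discount = 0.10, 0.40
print(on_demand_cost(rate, 350))   # cost when the job only needs 350 hours
print(reserved_cost(rate, discount))
print(break_even_hours(discount))
```

Under these assumed numbers the reservation only pays off above roughly 438 hours of use per month, so a workload that really needs 300-400 hours is cheaper on demand.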

EC2 Server types

EC2 has very small servers for light loads, such as t2.nano, which costs only $0.0058 per hour (about $5/month) and has one CPU core and 512 MB of RAM. There are other, more powerful general purpose servers in this category that can host all kinds of high-traffic websites or REST API endpoints for trained models, and these cost a lot less than what PaaS companies like Heroku charge.

On the other end of the spectrum are high CPU/compute servers such as ‘c5n.18xlarge’, which costs about $3.888 per hour and has 72 cores and 192 GB of RAM. We mainly use these high compute servers for training CPU-based machine learning models such as tf-idf.

For training deep learning models in TensorFlow, PyTorch etc. for word embeddings, there are also GPU instances such as p3.16xlarge, which costs about $24/hour and has Nvidia GPUs for fast computation.

If in doubt, you should use the smallest compute optimized server they have, which is c5.large ($0.085 per hour), and go up as needed. At Specrom Analytics, we get all our analysts comfortable on c5.large before moving to the c5n ones.

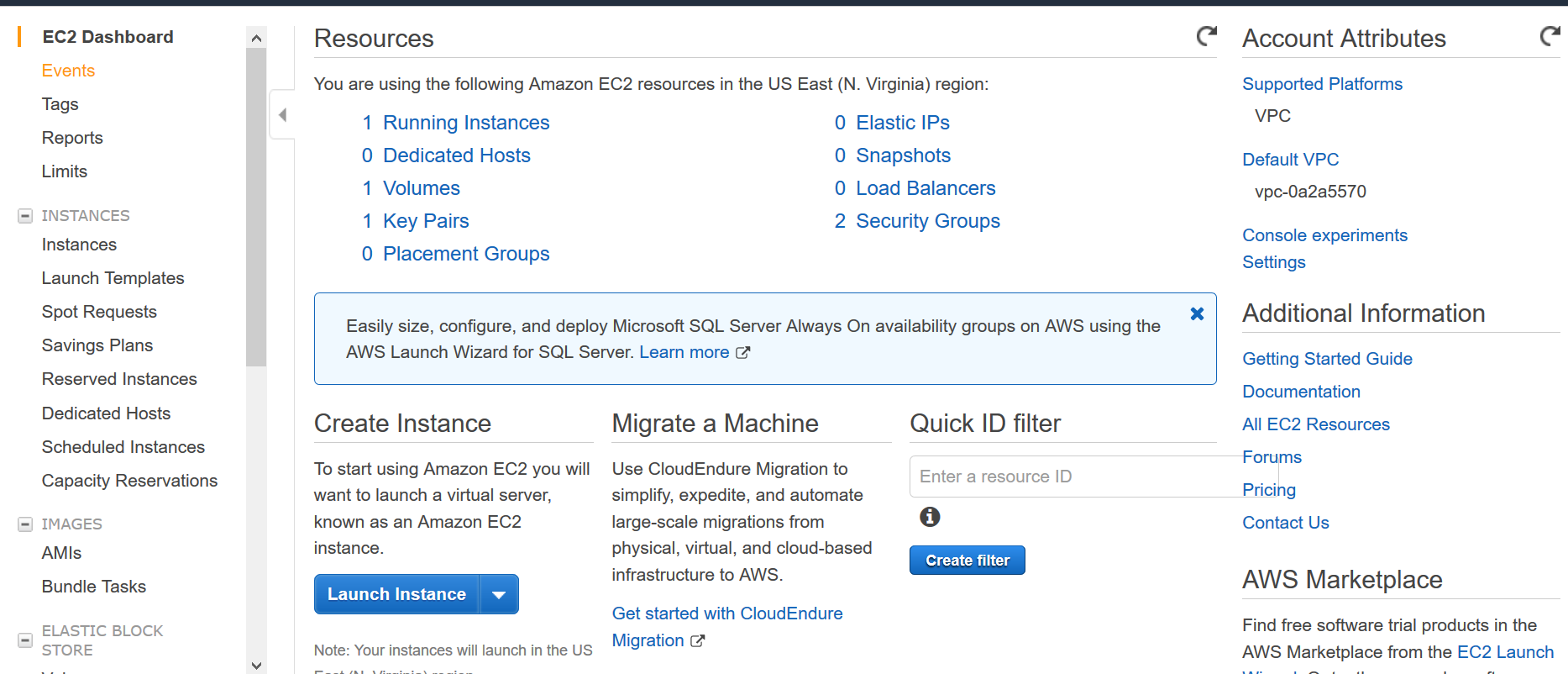

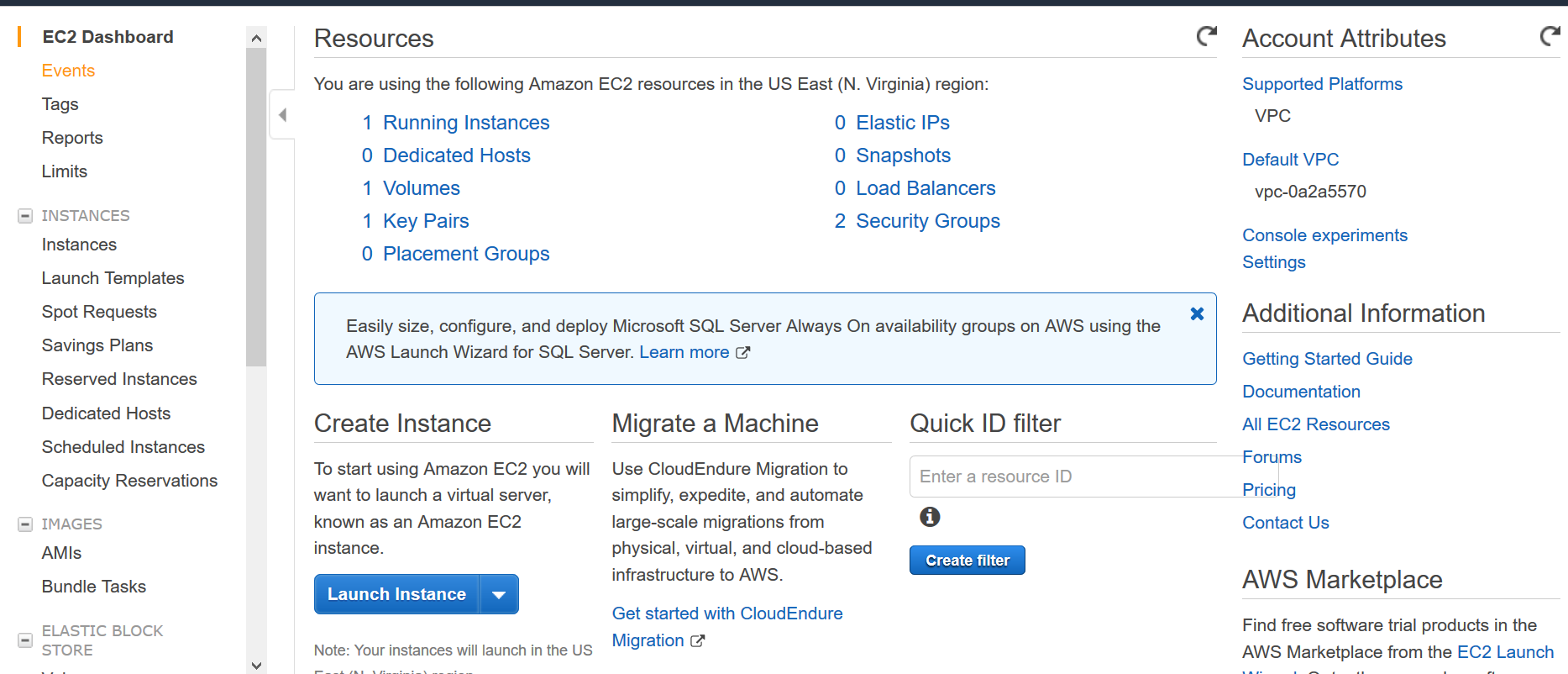

Spinning your first server

Log in to your AWS account.

You can choose a plain vanilla server with only the OS installed, but let's make things easier and select an instance from the marketplace that has the Anaconda distribution preinstalled.

Go to this site: https://aws.amazon.com/marketplace/pp/B07CNFWMPC?ref=cns_srchrow

Hit Continue to Subscribe in the top right corner.

Hit Continue on the page where it asks for the Python version and region.

On the next page, choose to launch through the EC2 page.

Continue through Add Storage (increase it to 30 GB from the default 8 GB) and Add Tags.

When you click Launch, you will be prompted to create a key pair. This step is important; create one key and save it on your computer. You will not be able to communicate with your server without this key.

Keep clicking Next until it says your instance is launching.

Now go to the AWS dashboard from the left corner, and pick the EC2 dashboard.

Click the running instances link and open the details of your instance.

Save the public DNS URL; you will need it to communicate with the server over SSH.

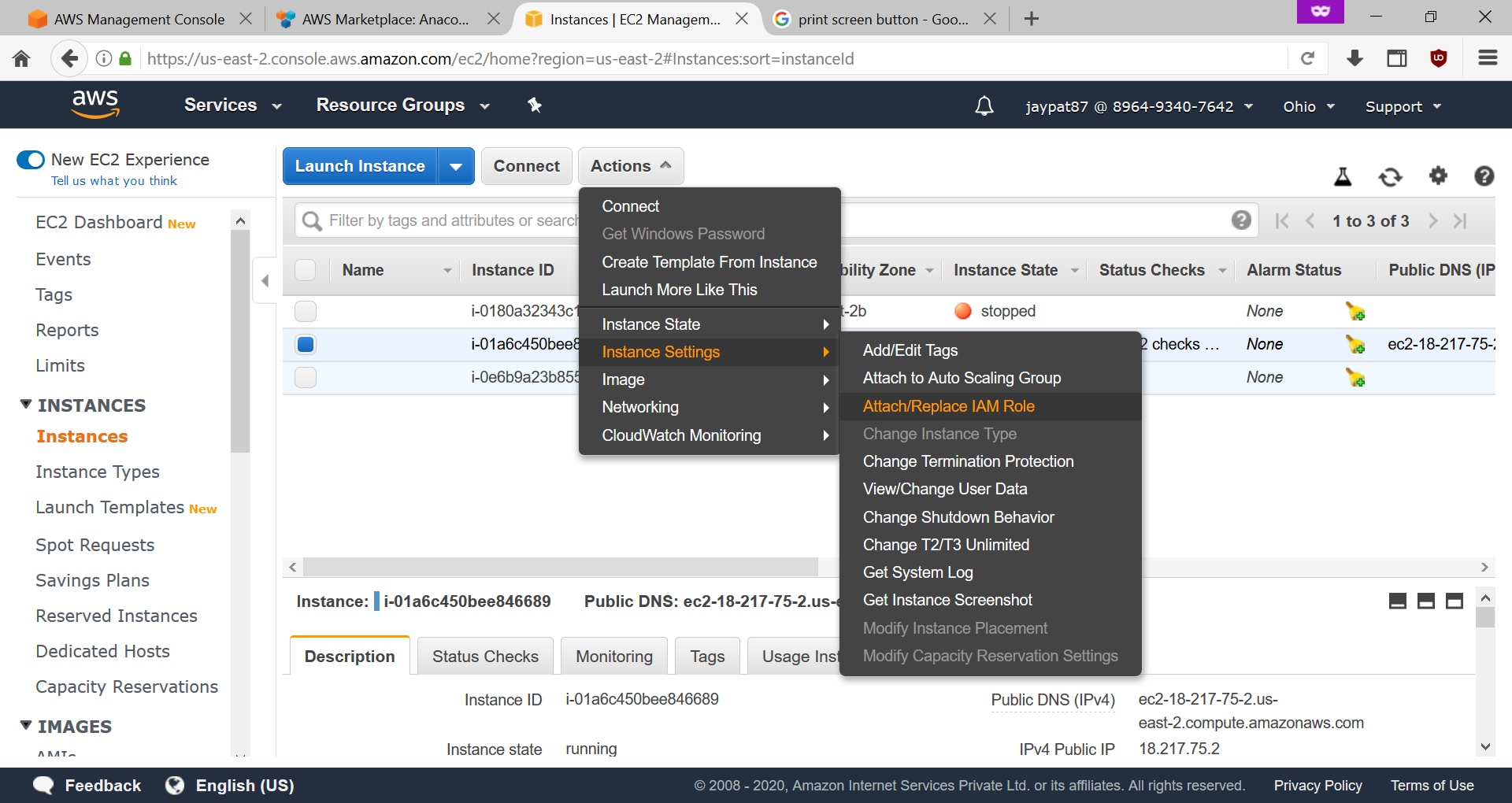

You can assign an IAM role to this EC2 server by clicking on Actions > Instance Settings > Attach/Replace IAM role
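For readers who later want to script these console steps, the sketch below shows roughly how the same choices (instance type, key pair, 30 GB of storage, optional IAM role) map onto boto3's `run_instances` call. It is only a sketch: the AMI ID must be supplied by you, the root device name `/dev/xvda` is an assumption that depends on the AMI, and the function names are ours.

```python
def launch_params(ami_id, key_name, instance_type="c5.large",
                  volume_gb=30, instance_profile=None):
    """Build keyword arguments for the EC2 run_instances call."""
    params = {
        "ImageId": ami_id,
        "InstanceType": instance_type,
        "KeyName": key_name,  # name of the key pair created in AWS
        "MinCount": 1,
        "MaxCount": 1,
        "BlockDeviceMappings": [
            {
                # Assumed root device name; check the AMI details for yours.
                "DeviceName": "/dev/xvda",
                "Ebs": {"VolumeSize": volume_gb},
            }
        ],
    }
    if instance_profile:
        # Equivalent of attaching an IAM role to the instance.
        params["IamInstanceProfile"] = {"Name": instance_profile}
    return params


def launch_instance(**kwargs):
    """Launch one instance and return its instance ID."""
    import boto3  # imported lazily; needs valid AWS credentials to run

    ec2 = boto3.client("ec2")
    response = ec2.run_instances(**launch_params(**kwargs))
    return response["Instances"][0]["InstanceId"]
```

Running `launch_instance(...)` starts a billable server, so remember to stop or terminate it when you are done.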

Communicating with your EC2 server using SSH

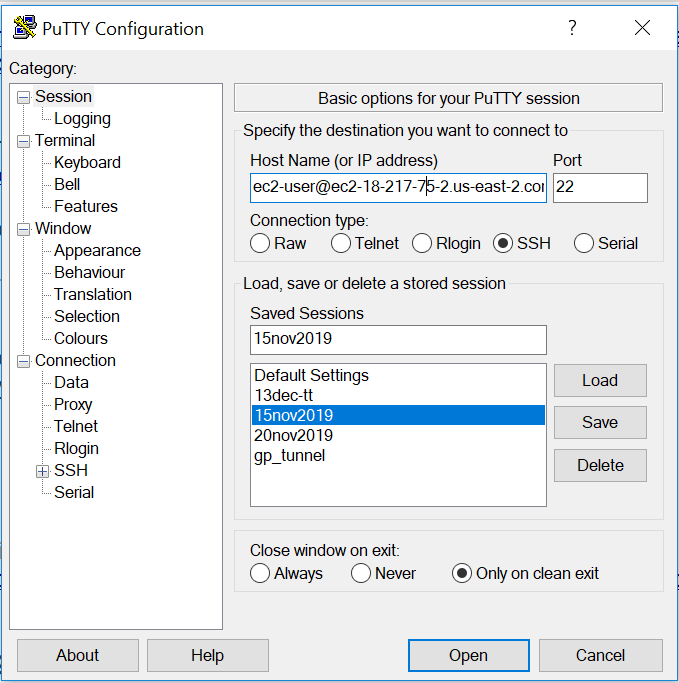

We are assuming that you have your server ready to go, so now let's try to talk to it from your local computer. If you are using Windows, download PuTTY (https://www.putty.org/).

Remember the key file you downloaded from AWS? That was in .pem format, but PuTTY only accepts keys in .ppk format. To convert .pem into .ppk, download PuTTYgen (https://www.puttygen.com/). Once you load the .pem file in PuTTYgen, click on Save private key and a .ppk file will be saved. Use this .ppk file in PuTTY in the next step.

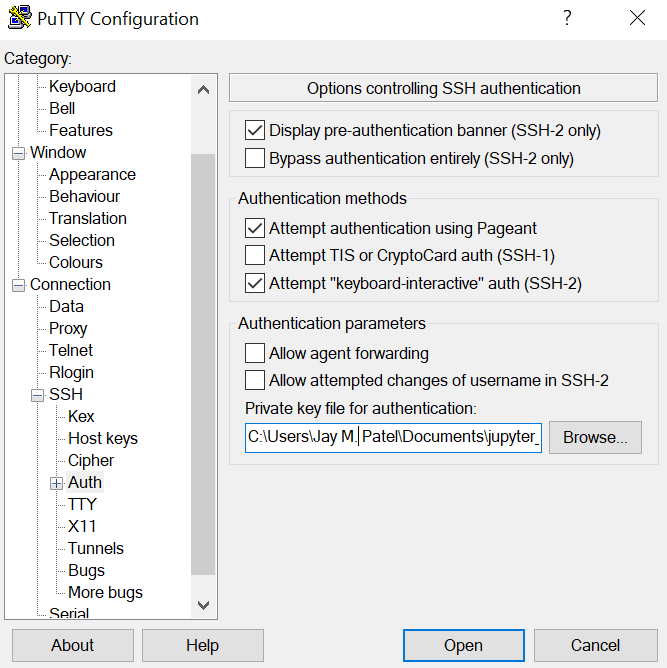

Next, go to SSH > Auth and select the location of the .ppk key file.

- Copy the public DNS address you got from the EC2 dashboard into the Host Name field in PuTTY. Don't forget to prepend the username, which is “ec2-user”, followed by @, before the address. Click Open to connect to your EC2 server.
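On macOS or Linux, PuTTY is not needed: the standard `ssh` client accepts the .pem file directly. The Python sketch below simply assembles that command so it can be printed or reused in scripts. The key file name and host name are placeholders, and `ec2-user` is the default user on Amazon Linux images, so other images may need a different name.

```python
import shlex


def ssh_command(key_path, public_dns, user="ec2-user"):
    """Return the ssh invocation as an argument list."""
    return ["ssh", "-i", key_path, f"{user}@{public_dns}"]


def as_shell_string(args):
    """Quote each argument so the command can be pasted into a terminal."""
    return " ".join(shlex.quote(arg) for arg in args)


# Placeholder key file and host name, for illustration only.
cmd = ssh_command("my-key.pem", "ec2-1-2-3-4.compute-1.amazonaws.com")
print(as_shell_string(cmd))
```

The ssh client refuses private keys that other users can read, so restrict the file first with `chmod 400 my-key.pem`.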